UIM | Zeta - Math Journal

This study systematically evaluates the robustness of Multi-Layer Perceptrons (MLPs) and Logistic Regression (LR) models to data perturbations using the MNIST handwritten digit dataset. Despite their foundational roles in machine learning, the comparative resilience of MLPs and LR to diverse perturbations (noise, geometric distortions, and adversarial attacks) remains underexplored. This gap matters because real-world applications (e.g., healthcare, autonomous systems) often operate on imperfect data, yet practitioners lack actionable guidance on model selection under such conditions. Existing studies predominantly focus on complex deep networks or isolated perturbation types, overlooking simpler models like LR and holistic evaluations. To address this, we test four perturbations across these three categories: Gaussian noise (σ = 0.1 to 1.0), salt-and-pepper noise (p = 0.1 to 0.5), rotational distortions (5° to 30°), and adversarial attacks (FGSM with ε = 0.05 to 0.30). Both models were trained on 60,000 MNIST samples and tested on 10,000 perturbed images. On clean data, the MLP reached a baseline accuracy of 97.07% versus 92.63% for LR, and it remained more robust under moderate noise and rotations. Under Gaussian noise (σ = 0.5), the MLP retained 35.35% accuracy compared to LR's 23.91%. However, adversarial attacks (FGSM, ε = 0.30) reduced MLP accuracy to 0.20%, revealing critical vulnerabilities. Statistical analysis (paired t-tests, p < 0.05) confirmed significant performance differences across perturbation levels. A linear regression (R² = 0.98) further quantified the MLP's predictable accuracy decline with Gaussian noise intensity. These findings underscore the necessity of robustness-aware model selection in noise-prone environments and highlight the urgent need for adversarial defense mechanisms in MLPs. Practitioners are advised to prioritize MLPs for tasks with moderate distortions, while future work should integrate robustness enhancements such as adversarial training.
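The two noise perturbations named in the abstract can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code, and it rests on assumptions the abstract does not state: pixel values are scaled to [0, 1], noisy images are clipped back into that range, and the salt-and-pepper probability p is the total fraction of corrupted pixels, split evenly between black and white. The function names and the random batch standing in for MNIST images are hypothetical. Rotations are left out to keep the sketch free of extra dependencies.

```python
import numpy as np

def add_gaussian_noise(images, sigma, rng):
    """Add zero-mean Gaussian noise with std `sigma`, then clip to [0, 1] (assumed range)."""
    noisy = images + rng.normal(0.0, sigma, size=images.shape)
    return np.clip(noisy, 0.0, 1.0)

def add_salt_and_pepper(images, p, rng):
    """Corrupt a fraction `p` of pixels: half set to 0 (pepper), half to 1 (salt).

    The even split is an assumption; the abstract only gives the range of p.
    """
    out = images.copy()
    u = rng.random(images.shape)           # one uniform draw per pixel
    out[u < p / 2] = 0.0                   # pepper
    out[(u >= p / 2) & (u < p)] = 1.0      # salt
    return out

# Hypothetical stand-in for a small batch of 28x28 MNIST images in [0, 1].
rng = np.random.default_rng(0)
batch = rng.random((4, 28, 28))

gauss = add_gaussian_noise(batch, 0.5, rng)   # sigma = 0.5, as in the reported result
sp = add_salt_and_pepper(batch, 0.3, rng)     # p = 0.3, inside the tested range
```

Evaluating a trained model on `gauss` or `sp` at each noise level would then give one point on an accuracy-versus-intensity curve of the kind the abstract summarizes.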

This study demonstrates that Multi-Layer Perceptrons (MLPs) generally exhibit higher robustness than Logistic Regression (LR) under moderate noise and rotational distortions. However, both models are vulnerable to extreme perturbations, with performance collapsing at high noise levels or large rotations. The research highlights the critical need for adversarial defense mechanisms in MLPs, as they are highly susceptible to adversarial attacks despite their robustness to random noise. These findings provide practical guidance for model selection in noise-prone environments and emphasize the importance of integrating robustness enhancements into machine learning workflows.
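To make the adversarial vulnerability concrete, the Fast Gradient Sign Method (FGSM) perturbs each input by ε in the direction of the sign of the loss gradient: x_adv = x + ε · sign(∇ₓ L). The sketch below applies it to a softmax (multinomial logistic) regression model, where the input gradient has a closed form. It is an illustration under stated assumptions, not the study's implementation: the weights `W`, bias `b`, inputs, and labels are random placeholders, inputs are assumed to lie in [0, 1], and all function names are hypothetical.

```python
import numpy as np

def softmax(z):
    """Row-wise softmax with max-subtraction for numerical stability."""
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def cross_entropy(x, y, W, b):
    """Per-sample cross-entropy loss of a linear softmax classifier."""
    probs = softmax(x @ W + b)
    return -np.log(probs[np.arange(len(y)), y] + 1e-12)

def fgsm(x, y, W, b, eps):
    """FGSM for a linear softmax model: x + eps * sign(dL/dx), clipped to [0, 1]."""
    probs = softmax(x @ W + b)
    onehot = np.eye(W.shape[1])[y]
    grad_x = (probs - onehot) @ W.T        # closed-form gradient of the loss w.r.t. x
    return np.clip(x + eps * np.sign(grad_x), 0.0, 1.0)

# Placeholder model and data (random, NOT a trained MNIST classifier).
rng = np.random.default_rng(0)
W = rng.normal(0.0, 0.1, size=(784, 10))
b = np.zeros(10)
x = rng.random((8, 784))
y = rng.integers(0, 10, size=8)

x_adv = fgsm(x, y, W, b, eps=0.30)         # eps = 0.30, the largest value tested
loss_clean = cross_entropy(x, y, W, b)
loss_adv = cross_entropy(x_adv, y, W, b)
```

Because the loss of a linear softmax model is convex in the input, a step along the gradient sign cannot lower it, so `loss_adv` is at least `loss_clean` for every sample. For an MLP the gradient would instead come from backpropagation, but the update rule is the same.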

Further research could explore hybrid architectures that combine the strengths of MLPs in handling moderate noise with defense mechanisms against adversarial attacks. It is also important to investigate robustness-enhancing methods, such as adversarial training and input preprocessing, that can be applied to MLPs to improve their resilience to various types of perturbation. Broader comparative studies are also needed to evaluate the performance of different machine learning models on more complex and representative datasets, and to identify the key factors that affect model robustness in real-world scenarios. Such research can help develop more reliable and secure machine learning models for critical applications such as autonomous systems and medical diagnosis.

- ZOO | Proceedings of the 10th ACM Workshop on Artificial Intelligence and Security. doi.org/10.1145/3128572.3140448

- A Robustness Study of Multi-Layer Perceptrons and Logistic Regression to Data Perturbation: MNIST Dataset... doi.org/10.31102/zeta.2025.10.1.39-50

- Adversarial attacks on machine learning systems for high-frequency trading | Proceedings of the Second... dl.acm.org/doi/10.1145/3490354.3494367


Related /

UNRAMUNRAM Hasil kegiatan menunjukkan bahwa pagar pengaman berhasil terpasang sesuai rencana, dengan fungsi utama sebagai pembatas fisik yang mengurangi akses orangHasil kegiatan menunjukkan bahwa pagar pengaman berhasil terpasang sesuai rencana, dengan fungsi utama sebagai pembatas fisik yang mengurangi akses orang

MES BOGORMES BOGOR Penelitian menggunakan desain deskriptif korelatif dengan pendekatan cross-sectioanal. Penelitian dilakukan pada 50 lansia berusia ≥60 tahun yang dipilihPenelitian menggunakan desain deskriptif korelatif dengan pendekatan cross-sectioanal. Penelitian dilakukan pada 50 lansia berusia ≥60 tahun yang dipilih

LOCUSMEDIALOCUSMEDIA Dengan pendekatan yuridis normatif, ditemukan bahwa reformasi HAN tidak cukup dilakukan secara normatif, namun perlu restrukturisasi kelembagaan, penguatanDengan pendekatan yuridis normatif, ditemukan bahwa reformasi HAN tidak cukup dilakukan secara normatif, namun perlu restrukturisasi kelembagaan, penguatan

SARI MUTIARASARI MUTIARA Keragaman pangan mencerminkan tingkat kecukupan gizi seseorang. Rendahnya pola asuh menyebabkan buruknya status gizi balita. Tujuan kegiatan ini adalahKeragaman pangan mencerminkan tingkat kecukupan gizi seseorang. Rendahnya pola asuh menyebabkan buruknya status gizi balita. Tujuan kegiatan ini adalah

LAAROIBALAAROIBA Sejalan dengan hal tersebut, strategi revenge tourism dapat dijadikan acuan untuk mengembalikan semangat pariwisata ke Gunung Bromo pasca kebakaran. StrategiSejalan dengan hal tersebut, strategi revenge tourism dapat dijadikan acuan untuk mengembalikan semangat pariwisata ke Gunung Bromo pasca kebakaran. Strategi

UBTUBT Kegiatan pengabdian masyarakat dilakukan dengan metode presentasi/ceramah, presentasi dilakukan selama kurang lebih 30 menit. Peserta diberikan informasiKegiatan pengabdian masyarakat dilakukan dengan metode presentasi/ceramah, presentasi dilakukan selama kurang lebih 30 menit. Peserta diberikan informasi

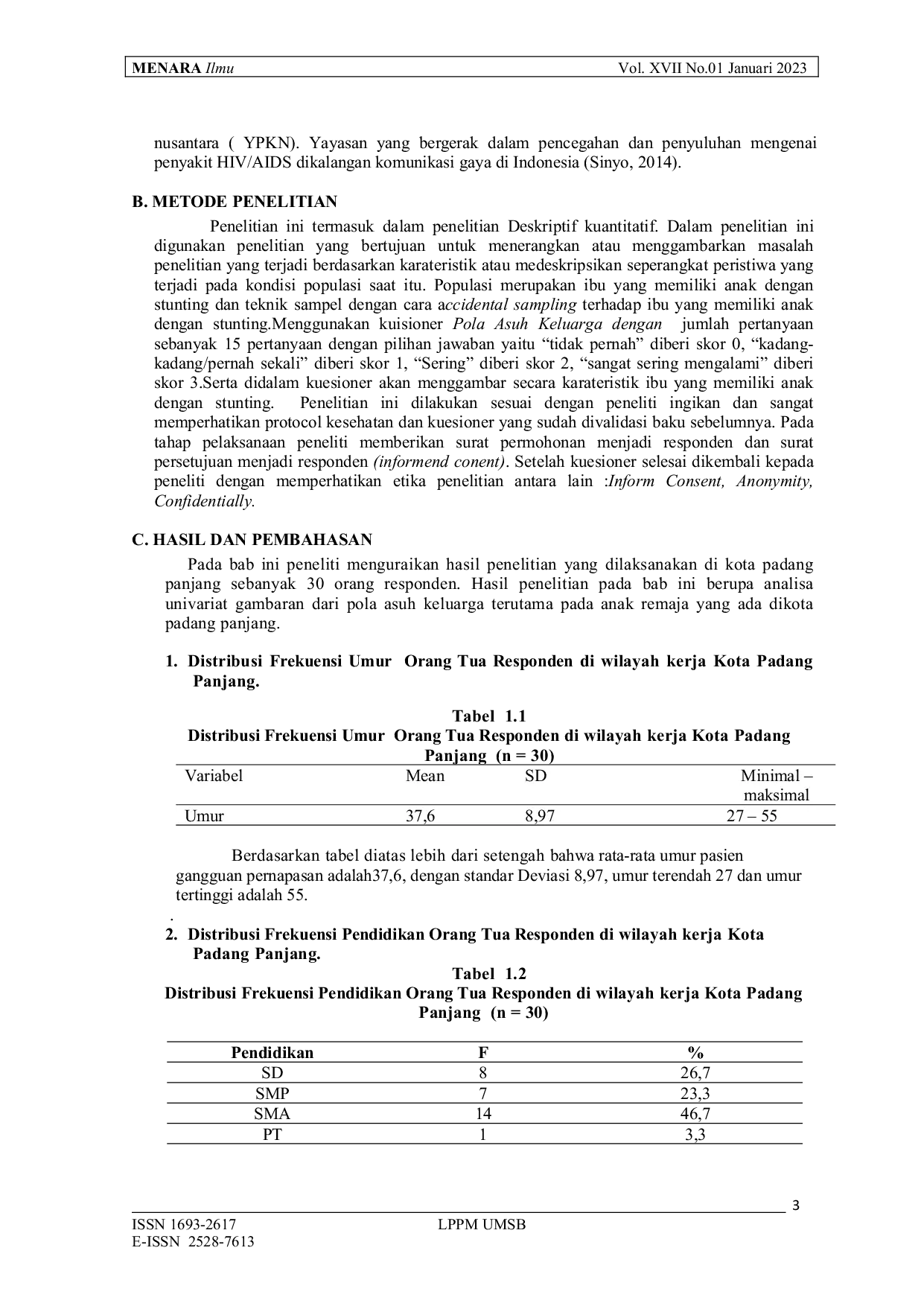

UMSBUMSB Hasil penelitian menunjukkan bahwa Kota Padang Panjang, yang dikenal sebagai Kota Serambi Mekkah dan kota pendidikan, masih menjunjung tinggi kontrol sosialHasil penelitian menunjukkan bahwa Kota Padang Panjang, yang dikenal sebagai Kota Serambi Mekkah dan kota pendidikan, masih menjunjung tinggi kontrol sosial

MKRIMKRI Pembatalan undang-undang tersebut mengembalikan prinsip pengelolaan air pada UU Nomor 11 Tahun 1974, yang mengamanatkan pengelolaan oleh pemerintah atauPembatalan undang-undang tersebut mengembalikan prinsip pengelolaan air pada UU Nomor 11 Tahun 1974, yang mengamanatkan pengelolaan oleh pemerintah atau

Useful /

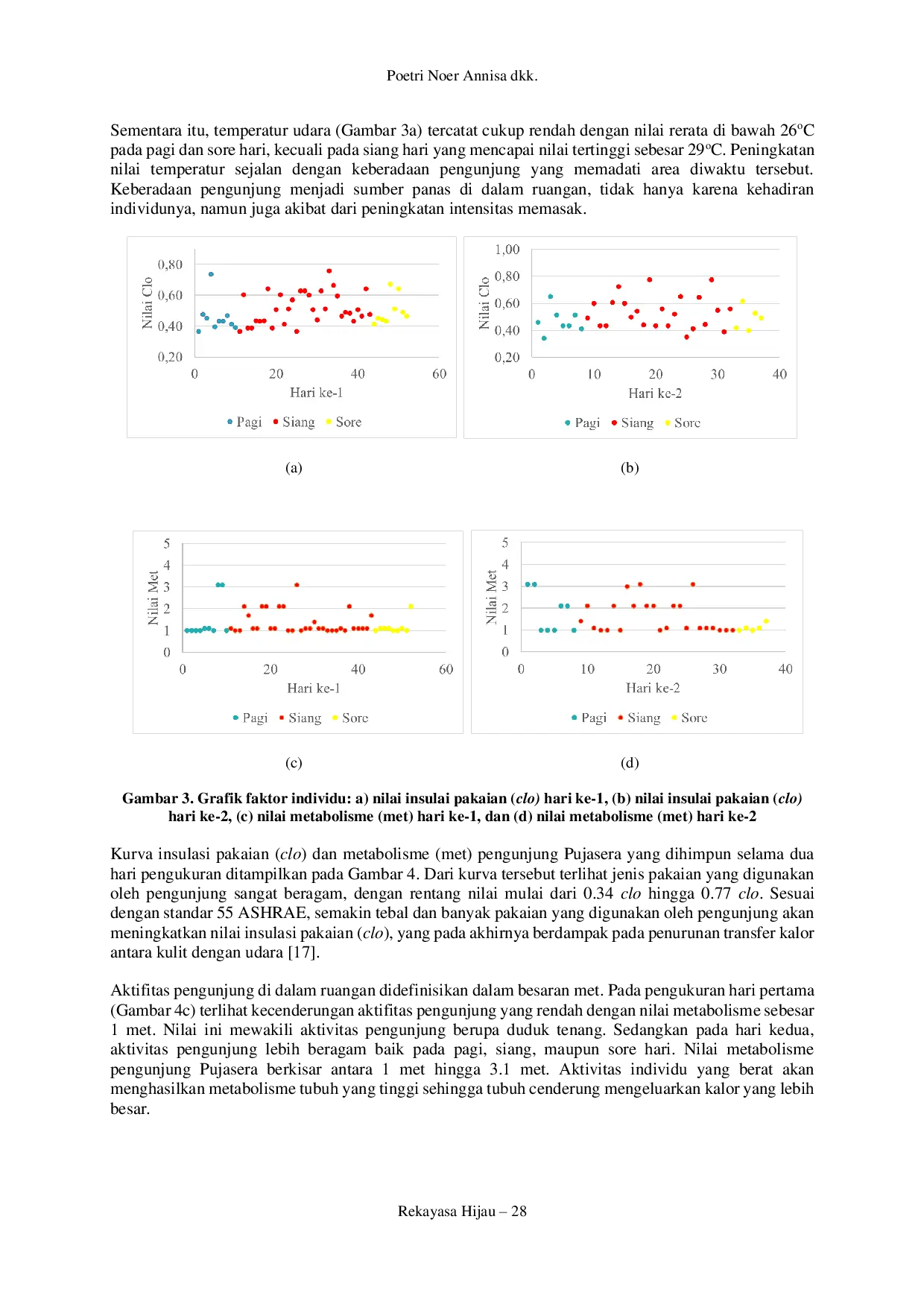

ITENASITENAS Kenyamanan termal dan kualitas udara dalam ruang merupakan faktor penting yang memberikan dampak bagi produktivitas penghuni, tidak terlepas bagi bangunanKenyamanan termal dan kualitas udara dalam ruang merupakan faktor penting yang memberikan dampak bagi produktivitas penghuni, tidak terlepas bagi bangunan

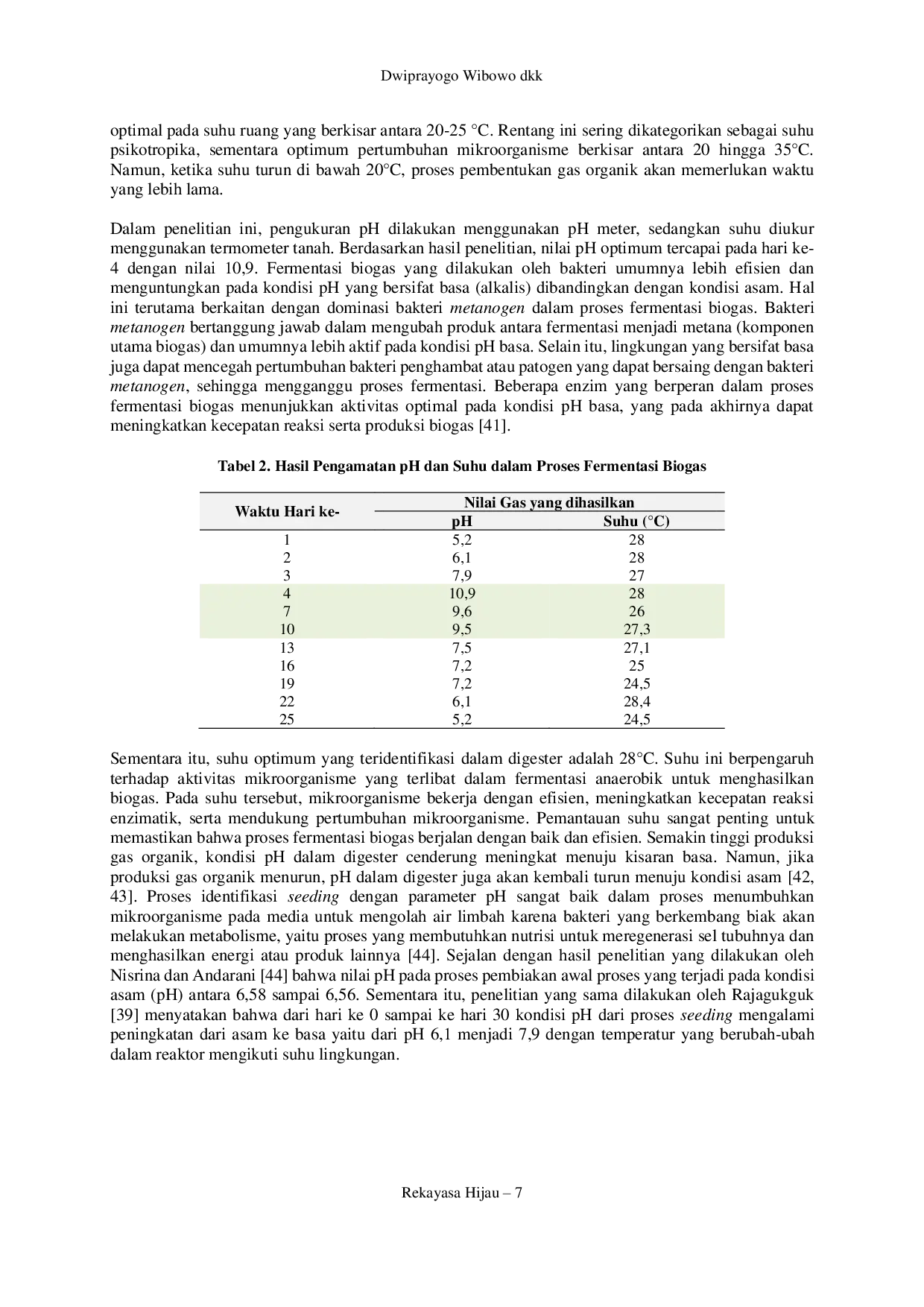

ITENASITENAS Bakteri metanogen yang dominan dalam fermentasi biogas lebih efisien pada kondisi pH basa. Kinerja fermentasi dan produksi biogas dipengaruhi oleh perubahanBakteri metanogen yang dominan dalam fermentasi biogas lebih efisien pada kondisi pH basa. Kinerja fermentasi dan produksi biogas dipengaruhi oleh perubahan

LAAROIBALAAROIBA Peneliti percaya bahwa kesehatan mental dapat memiliki hubungan positif dan pengaruh signifikan terhadap kinerja karyawan karena kesehatan mental, yangPeneliti percaya bahwa kesehatan mental dapat memiliki hubungan positif dan pengaruh signifikan terhadap kinerja karyawan karena kesehatan mental, yang

LAAROIBALAAROIBA Namun, saat ini, produk halal telah menjadi tren yang tidak hanya menjadi kebutuhan konsumen, tetapi juga menjadi kewajiban bagi produsen dalam memproduksi.Namun, saat ini, produk halal telah menjadi tren yang tidak hanya menjadi kebutuhan konsumen, tetapi juga menjadi kewajiban bagi produsen dalam memproduksi.